Phantom

Building a tool so that the best ideas stop dying in bad presentations.

Role

Founder & Designer-Engineer

Timeline

Feb 2025 – Present

Stack

React, TypeScript, Claude Code

Outcome

Live product, 98 components, 40+ layouts

01 / The world

The best work dies in the room it was presented in.

The Researcher

Ran an exhaustive 200-person study, but buried the critical insight on slide 47.

The PM

Had the data to kill a failing feature, but couldn't structure the argument to convince leadership.

The Designer

Nailed the perfect solution to the exact problem, but couldn't get buy-in because the deck didn't make the case.

The problem is never the idea. It's the structure. What to lead with, what to cut, what story the data tells. Nobody teaches this.

So I spent years learning what makes an argument land.

Situation, Complication, Resolution. Every great presentation is a story. Set the world, break it, show the way out.

Mutually Exclusive, Collectively Exhaustive. If your categories overlap or miss something, your audience finds the gap before you do.

Lead with the answer. Nobody waits for your buildup. Say what you mean, then prove it.

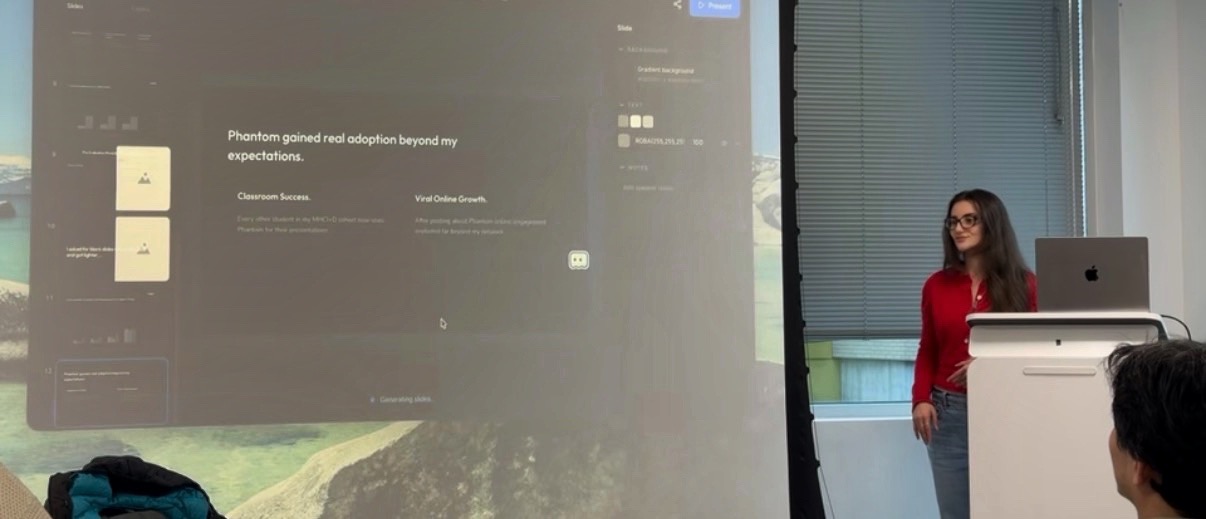

I studied the frameworks, practiced until I could win competitions, and designed every slide of the deck that took 1st place at FigBuild 2026. I know what makes an argument land. And I know most people never get the chance to learn it.

Yet every AI presentation tool on the market disregards this.

When the output is bad, they blame the user.

With Gamma, Tome, and Beautiful.ai, the user has to learn how to talk to the machine. That's not an AI problem. That's a design problem.

I wanted to build the tool that understands the user. Not the other way around.

02 / The fight

I poured weeks into building the tool I wished existed.

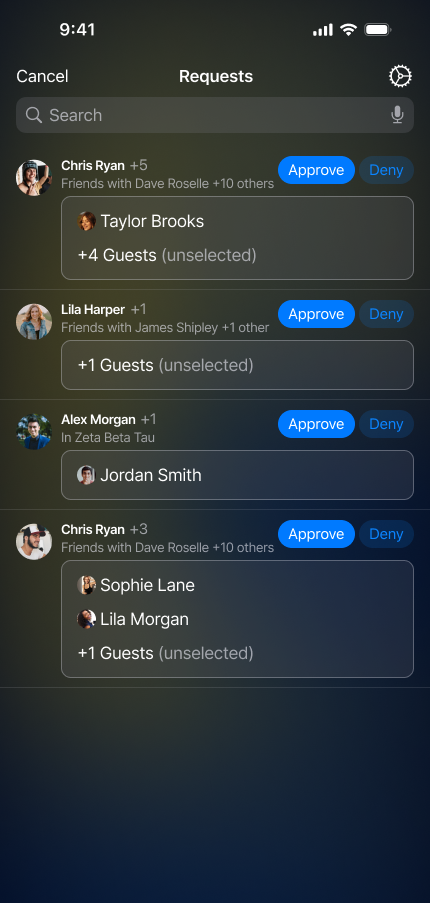

A single AI agent that sees everything at once: structure, data, design. I gave it a character: a ghost that lives on the canvas with you. I fed it every framework I knew. 98 React components. 40+ layout presets.

The editor

Then I sat next to my first user and watched her almost present data she didn't know was fake.

A classmate from my cohort uploaded her real study data. The AI generated polished slides with clean charts and clear hierarchy. Everything looked exactly right.

“Wait. That's not my number. Where did 73% come from?”

[Generated slide mockup: "User Research Report: Q3 Adoption Metrics", with panels "Overall Response Rate" and "Sample Split"]

This was everything I built Phantom to be against.

She would have presented those numbers to her team. The design was so polished that neither of us questioned the content.

I never would have known.

I started to wonder if AI just wasn't there yet. I was ready to give up.

I understood why no other company was doing this right. Maybe they knew something I didn't. Maybe the technology wasn't ready for what I was trying to build.

Then I demoed it to a room full of people. It wasn't perfect. They wanted it anyway.

The hallucination problem was still there. But when the slides generated live on screen, the room went quiet. Then the questions started. Then everyone wanted the link. They could see it was solving a real problem that nothing else was touching.

I couldn't make AI stop hallucinating. But I could make it stop hiding.

03 / The outcome

So I redesigned everything. Not around beauty. Around honesty.

I went back to the agents. Untangled them. Rebuilt the pipeline so real data flows through the system without the AI touching it. Taught the ghost to flag its own uncertainty instead of hiding it. At every wall I hit, I found the problem behind it and kept going.
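One way to picture a pipeline where the AI never touches the numbers: the model is only allowed to emit text and references to keys in the user's parsed file, and a separate step fills in the real values. The sketch below is a minimal illustration under that assumption. Every name in it (`DataTable`, `Token`, `resolveSlide`) is hypothetical and not taken from Phantom's codebase, and the sample value is made up.

```typescript
// Hypothetical types for illustration only; not Phantom's actual code.

// Values parsed from the user's uploaded file, keyed by metric name.
type DataTable = Record<string, number>;

// The model may emit plain text or a reference to a key, never a number itself.
type Token =
  | { kind: "text"; value: string }
  | { kind: "ref"; key: string };

interface ResolvedSlide {
  text: string;
  missing: string[]; // references the user's file could not satisfy
}

// Fill every reference from the user's data. Unknown keys are surfaced
// in the output and collected in `missing`, never guessed.
function resolveSlide(tokens: Token[], data: DataTable): ResolvedSlide {
  const missing: string[] = [];
  const parts = tokens.map((t) => {
    if (t.kind === "text") return t.value;
    if (Object.prototype.hasOwnProperty.call(data, t.key)) {
      return String(data[t.key]);
    }
    missing.push(t.key);
    return "[missing: " + t.key + "]";
  });
  return { text: parts.join(""), missing };
}

// Usage with a made-up value standing in for the user's file.
const userData: DataTable = { response_rate: 41 };
const slide = resolveSlide(
  [
    { kind: "text", value: "Overall response rate: " },
    { kind: "ref", key: "response_rate" },
    { kind: "text", value: "%" },
  ],
  userData,
);
console.log(slide.text); // prints "Overall response rate: 41%"
```

In this shape the only path from a number to a slide runs through the user's own table, so a figure the file does not contain shows up as a visible gap rather than a plausible invention.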

Before

The AI invented numbers and nobody caught it.

After

Now the AI can't touch the numbers. Real values flow from the user's file, so it is architecturally impossible for it to misstate them.

Before

The output looked confident even when it was wrong.

After

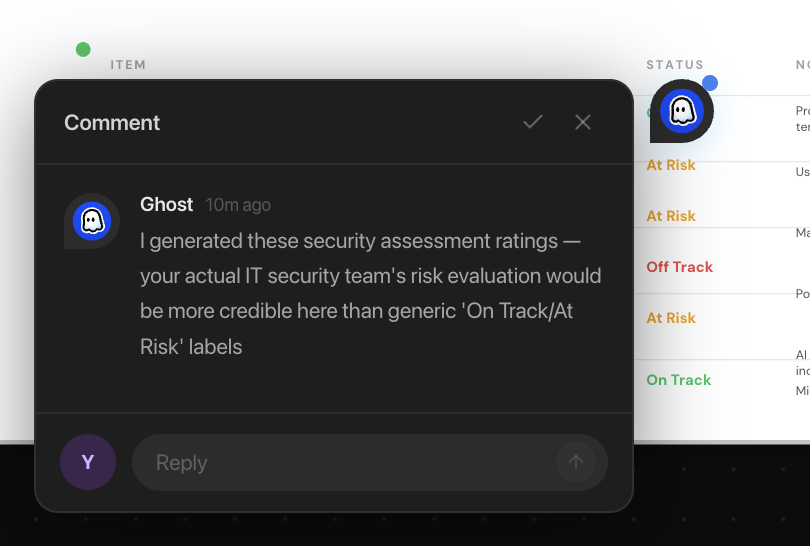

Now the ghost flags what it made up. It says: your actual data would be more credible here.

Before

The user presented an argument they didn't build.

After

Now the user stays in the argument. The ghost suggests. You decide. You can change anything it produces.
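The second "After" above, where the ghost flags what it made up, could be sketched as a provenance check: each claim carries a record of where it came from, and any AI-written claim containing a number gets a visible note. This is a minimal sketch under that assumption. The names (`Provenance`, `Claim`, `flagClaims`) and the digit-matching rule are hypothetical, not Phantom's implementation.

```typescript
// Hypothetical shapes for illustration only; not Phantom's actual code.

// Where a claim on a slide came from.
type Provenance = "user_file" | "ai_generated";

interface Claim {
  text: string;
  source: Provenance;
}

interface FlaggedClaim extends Claim {
  flagged: boolean;
  note?: string;
}

// Matches any digit, so "73%", "3x" and "2025" all count as numeric claims.
const HAS_NUMBER = /\d/;

// Flag every AI-written claim that contains a number the user did not supply.
function flagClaims(claims: Claim[]): FlaggedClaim[] {
  return claims.map((c) => {
    const invented = c.source === "ai_generated" && HAS_NUMBER.test(c.text);
    return invented
      ? { ...c, flagged: true, note: "Your actual data would be more credible here." }
      : { ...c, flagged: false };
  });
}

// Usage: only the AI-written claim containing a number is flagged.
const reviewed = flagClaims([
  { text: "Response rate reached 73%", source: "ai_generated" },
  { text: "Adoption improved across the cohort", source: "ai_generated" },
]);
console.log(reviewed.filter((c) => c.flagged).length); // prints 1
```

The rule is deliberately blunt. It does not judge whether a number is right, only whether it arrived from the user's file, which keeps the decision with the person presenting.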

Now the ghost doesn't generate your argument. It helps you find it.

I knew what real collaboration felt like because I'd been doing it by hand.

Before Phantom, the CEO would bring me his investor pitch the night before a meeting. The argument was there but the structure was wrong. He'd lead with the product when investors cared about the market. He'd bury the traction slide behind three slides of roadmap.

I'd rebuild the narrative, redesign the visuals, and he'd walk in the next morning presenting something he believed in. Because it was still his argument. I just helped him see the story in it. That's what I designed the ghost to be.

I shipped it. The entire product. By myself.

React, TypeScript, Claude API. 98 components. 40+ layout presets. A drag-and-drop canvas editor with a full AI generation pipeline. Live at phantomslides.com.

I started as a designer who thought the problem was ugly slides. I became a designer who designs how AI and humans think together.